From the Experts:

A Guide to Data in Digital Asset Management


Authored by:

Madi Weland Solomon,

Principal Consultant, ICP

What makes for data maturity? In digital asset management, it's mostly about understanding the larger context of the data ecosystem, understanding its key dependencies, and identifying key success measures. We are inundated with data, and this deluge can quickly turn to noise if it is not managed and maintained. To keep it useful, data quality and data relevance checks must be carried out continuously. In this chapter, we provide a head start on the many ways to keep your data healthy, usable, actionable, and measurable.

Data Maturity: Playing Well With Others

Data maturity is never completely achieved. We may think we understand the impact and importance of data, but there are so many ways data can be queried and used that harnessing it is limited only by our imagination. Data is a window into the culture of the business or organization, and there is no one-size-fits-all approach.

Data is in constant churn. It grows and morphs, and it requires a continuous commitment to maintain its quality and effectiveness. Talking about data may be incredibly boring, but clean, standardized, machine-readable data has the power to sustain the most successful companies in the world.

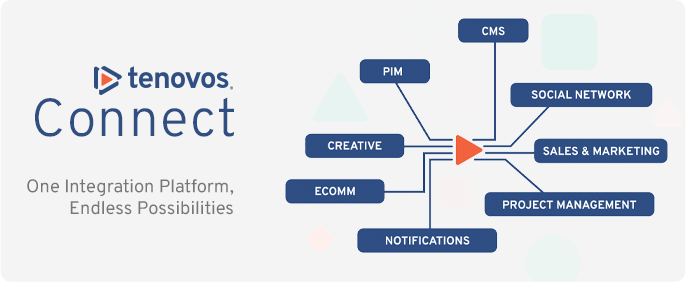

Making sure the DAM is ready to integrate and interact with other systems, with an eye towards omnichannel distribution, is a good sign of data maturity. As with all growth, the first step is realizing that the DAM is not a stand-alone system but depends on other data sets; that realization is what ensures your data will play well with others.

Understanding the digital ecosystem

Breaking out of siloed systems to create a digital ecosystem is the goal of omnichannel distribution. What is omnichannel? Omnichannel is the creation of an interconnected experience across multiple systems and repositories that provides customers with seamless service regardless of the channel they prefer. It rests on the separation of content and form.

Consider this: a book can now be distributed online, as an audiobook, as a hardback copy, or as a printable PDF. The content remains the same; only the form varies. With marketing materials, content takes whatever form the known customer prefers. Omnichannel integrates multiple, dependent data sets that can be configured to automate personalized distribution. Instead of the customer finding the products, the products find the customer.

Digital maturity in the omnichannel environment means awareness of the data dependencies across multiple platforms, such as DAM, Product Information Management (PIM), Customer Data Platform (CDP), and marketing collateral in a Content Management System (CMS). Specific data is managed in different systems, but each system must draw on data from the others to successfully automate delivery.
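To make the separation of content and form concrete, here is a minimal Python sketch, assuming a single content record and a hypothetical set of channel renderers. The channel names, the `Content` class, and the `deliver` function are illustrative only and do not represent any particular DAM or CMS API. The content stays fixed while the form is chosen from the customer's known channel preference.

```python
from dataclasses import dataclass

# Hypothetical content record: the "content" stays the same across channels.
@dataclass(frozen=True)
class Content:
    title: str
    body: str

# Each renderer produces one "form" of the same content (illustrative names).
def render_web(c: Content) -> str:
    return f"<h1>{c.title}</h1><p>{c.body}</p>"

def render_email(c: Content) -> str:
    return f"Subject: {c.title}\n\n{c.body}"

def render_sms(c: Content) -> str:
    # SMS form: title only, truncated to a single 160-character message.
    return c.title[:160]

RENDERERS = {"web": render_web, "email": render_email, "sms": render_sms}

def deliver(content: Content, preferred_channel: str) -> str:
    """Pick the form from the customer's known channel preference, defaulting to web."""
    renderer = RENDERERS.get(preferred_channel, render_web)
    return renderer(content)

launch = Content(title="Spring collection is here", body="See the new range.")
print(deliver(launch, "email"))
```

Adding a new channel means adding one renderer; the content record itself never changes, which is the point of separating content from form.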
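As an illustration of these cross-system dependencies, the following sketch joins hypothetical DAM, PIM, and CDP records on shared keys (a SKU and a segment ID) to decide which assets a customer segment should receive. All records, field names, and the `assets_for_segment` function are assumptions made for the example. If any one system lacks the shared key, the automated selection breaks.

```python
# Hypothetical records from three systems, linked by shared keys (SKU, segment ID).
dam_assets = [
    {"asset_id": "A1", "sku": "SKU-100", "asset_type": "Product image", "channel": "web"},
    {"asset_id": "A2", "sku": "SKU-100", "asset_type": "Video", "channel": "social"},
    {"asset_id": "A3", "sku": "SKU-200", "asset_type": "Product image", "channel": "web"},
]
pim_products = {
    "SKU-100": {"name": "Trail shoe", "in_stock": True},
    "SKU-200": {"name": "Rain jacket", "in_stock": False},
}
cdp_segments = {
    "outdoor-fans": {"preferred_channel": "web", "interests": {"SKU-100", "SKU-200"}},
}

def assets_for_segment(segment_id: str) -> list[dict]:
    """Select DAM assets for a CDP segment, using PIM data to skip unsellable products."""
    segment = cdp_segments[segment_id]
    selected = []
    for asset in dam_assets:
        product = pim_products.get(asset["sku"])
        if product is None or not product["in_stock"]:
            continue  # PIM says this product cannot be sold right now
        if asset["sku"] in segment["interests"] and asset["channel"] == segment["preferred_channel"]:
            # Enrich the DAM record with the PIM product name for delivery.
            selected.append({**asset, "product_name": product["name"]})
    return selected

print([a["asset_id"] for a in assets_for_segment("outdoor-fans")])  # ['A1']
```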

How to get your data to grow up: Preparing for future integrations

- CX Strategy: When preparing for omnichannel and automated personalization, a Customer Experience (CX) strategy is a good place to start. Identify the kinds of experiences you think are right for your customer. This can be as creative as your specialists can imagine, and may involve several layers of interactions that can track a customer’s journey from first-time buyer to brand advocate.

- Data Strategy: Once the goals of the CX are defined, develop a data model with actionable levels of detail that can be implemented across all the dependent systems. Not every system needs the same metadata, but each must be aware of its part in the overall orchestration of data. It only takes one non-compliant section or system to derail the whole strategy. Understand where the DAM fits into the content delivery strategy.

- Omnichannel activation: Integrate traditional and digital channels, scale with automation where possible, and respond to data insights from customer interactions.
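One way to check that every system knows its part in the data strategy is to write the shared data model down as "field → systems that must carry it" and compare it with what each system actually exposes. The sketch below assumes that representation; the field names, system list, and `compliance_gaps` function are hypothetical. Note that systems are not required to share the same metadata, only the fields the model assigns to them.

```python
# Hypothetical shared data model: each field lists the systems that must carry it.
DATA_MODEL = {
    "sku": {"DAM", "PIM", "CMS"},
    "asset_type": {"DAM", "CMS"},
    "customer_segment": {"CDP", "CMS"},
    "usage_rights": {"DAM"},
}

# Fields each system currently exposes (illustrative values).
SYSTEM_FIELDS = {
    "DAM": {"sku", "asset_type", "usage_rights"},
    "PIM": {"sku"},
    "CDP": {"customer_segment"},
    "CMS": {"sku", "asset_type"},  # missing customer_segment
}

def compliance_gaps(model: dict, systems: dict) -> dict[str, set[str]]:
    """Return, per system, the model fields it is expected to carry but does not."""
    gaps: dict[str, set[str]] = {}
    for field, required_in in model.items():
        for system in required_in:
            if field not in systems.get(system, set()):
                gaps.setdefault(system, set()).add(field)
    return gaps

print(compliance_gaps(DATA_MODEL, SYSTEM_FIELDS))  # {'CMS': {'customer_segment'}}
```

A single non-empty entry in the result is exactly the "one non-compliant system" that can derail the strategy.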

Data visibility

Data maturity is understanding that your data will be scrutinized, visualized, and monetized. Folks will be taking a good look at it, so be proud of your data. Beautifully expressed data in the DAM should invite innovation and become a signature of your work.

Keeping Data Relevant and Useful for You and for Others

Maintaining data integrity and ensuring its relevance is a sign of data maturity. In this section, we'll go over the many approaches to helping your data evolve through continuous improvement.

DAM Ways of Working health-check

It is recommended to conduct DAM Ways of Working health-checks once your DAM is up and running. The frequency of these health-checks will depend on the pace of engagement from your DAM users. Good data is dependent on good processes, so validate that these are working to expectations.

- User feedback and root cause analysis: Are data imports accurate and relevant? Talk to the people who are managing the data in the DAM. Are they importing master data from another source? Is the data accurate? Work with them to identify the root cause and help them correct it.

- Is metadata being applied to the right assets in the right sequence? Is anyone quality checking the metadata? Be sure that users are up to speed on DAM training and are informed of the requirements for uploading assets to the DAM.

- Governance and accountability: Make data quality assurance a must-have. Building a governance plan for data can take many forms, but it is essential for setting up parameters for quality assurance. Data governance might include a governance body, or it might take the form of data requirements that must be met before proceeding to the next workflow task. Data maturity relies on good governance and standards.
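The second form of governance, data requirements that must be met before the next workflow task, can be sketched as a simple quality gate. This is a minimal illustration assuming a hypothetical mapping of workflow steps to required fields; the step names, field names, and the `advance` function are not taken from any specific DAM product.

```python
# Hypothetical governance rule: fields that must be filled before an asset moves on.
REQUIRED_BEFORE = {
    "review": ["title", "asset_type", "format"],
    "publish": ["title", "asset_type", "format", "usage_rights", "expiry_date"],
}

def missing_fields(asset: dict, next_task: str) -> list[str]:
    """List required fields that are absent or blank for the next workflow task."""
    return [
        field for field in REQUIRED_BEFORE.get(next_task, [])
        if not str(asset.get(field) or "").strip()
    ]

def advance(asset: dict, next_task: str) -> str:
    """Move the asset forward only if it passes the quality gate."""
    gaps = missing_fields(asset, next_task)
    if gaps:
        return f"blocked: missing {', '.join(gaps)}"
    asset["status"] = next_task
    return f"moved to {next_task}"

asset = {"title": "Pack shot", "asset_type": "Product image", "format": "JPEG", "usage_rights": ""}
print(advance(asset, "review"))   # moved to review
print(advance(asset, "publish"))  # blocked: missing usage_rights, expiry_date
```

Reporting which fields blocked an asset, rather than just rejecting it, gives users the feedback they need to correct the data themselves.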

Keeping Terminology Relevant

Taxonomies are living structures and must be updated on a regular basis. The language we use to describe assets and content changes with current trends and cultures, and these changes should be reflected in the taxonomy. To keep your taxonomy up to date, bi-yearly health checks are suggested.

How to health-check your taxonomy

Use these common taxonomy maintenance tasks to keep your taxonomy relevant:

- Truncate or deprecate antiquated and outdated language: Analyze which terms in the taxonomy are used most and which are rarely used. This tells you where to begin when cleaning up the taxonomy. Remove the terms that you anticipate will never be used by your organization and keep the taxonomy as light as possible. Try to keep the hierarchy no more than three levels deep. Broad and shallow taxonomies are the easiest to manage and maintain.

- The unattended taxonomy: It is not unusual for a non-taxonomist to be assigned to manage the taxonomy. This usually results in new terms being added to the taxonomy that are not properly placed in the relevant classifications, creating a growing pool of floating terms that have no relationship to the structure of the taxonomy (parent/child, related terms, variant terms, etc.). Keep your top classifications clean and place the floating terms in their relevant category. If you cannot find the category, then create a new classification that may grow in time. It is up to you whether or not to accept a “Miscellaneous” category, but we taxonomists are collectively against it, citing a lack of resourcefulness if you can’t create a better classification.
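The maintenance tasks above can be partly automated. The sketch below assumes the taxonomy is available as a simple "term → parent" mapping plus a log of tags applied to assets; both structures, the sample terms, and the `health_check` function are hypothetical. It flags rarely used terms, terms deeper than three levels, and "floating" terms that sit at the top level without being an approved classification.

```python
from collections import Counter

# Hypothetical taxonomy: term -> parent term (None for a top-level classification).
TAXONOMY = {
    "Products": None,
    "Footwear": "Products",
    "Running shoes": "Footwear",
    "Trail running shoes": "Running shoes",  # fourth level
    "Apparel": "Products",
    "Campaigns": None,
    "Spring 2019": "Campaigns",
    "Influencer": None,                      # added without placement
}
TOP_CLASSIFICATIONS = {"Products", "Campaigns"}

def depth(term: str) -> int:
    """Number of levels from the top classification down to this term (top = 1)."""
    level = 1
    while TAXONOMY[term] is not None:
        term = TAXONOMY[term]
        level += 1
    return level

def health_check(tag_log: list[str], max_depth: int = 3, min_uses: int = 1) -> dict:
    usage = Counter(tag_log)
    return {
        # Candidates to truncate or deprecate: used fewer than min_uses times.
        "rarely_used": sorted(
            t for t in TAXONOMY if t not in TOP_CLASSIFICATIONS and usage[t] < min_uses
        ),
        # Terms that push the hierarchy deeper than the recommended limit.
        "too_deep": sorted(t for t in TAXONOMY if depth(t) > max_depth),
        # Top-level terms that are not approved classifications ("floating" terms).
        "floating": sorted(
            t for t in TAXONOMY if TAXONOMY[t] is None and t not in TOP_CLASSIFICATIONS
        ),
    }

tags_applied = ["Running shoes", "Running shoes", "Apparel", "Influencer", "Footwear"]
print(health_check(tags_applied))
```

The output is a worklist for a person to review, not an instruction to delete: a rarely used term may still be needed, and a floating term needs a taxonomist to decide where it belongs.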

Outsourcing taxonomy and tagging

Using third-party services to quality check and add metadata is a viable solution, but be aware that it will take considerable oversight. Different cultures have their own vernacular, and outside taggers don't always tag in the vernacular of the audiences who will be viewing and searching for the assets. A good example is the difference between US and UK nomenclature. Have you eaten a courgette lately? How about searching for women's pants? In the US, a courgette is a zucchini, and in the UK, pants are underwear rather than trousers. Be aware that those outside the culture will perceive the references in digital assets through their own perspectives and will need to be prompted to add the relevant synonyms.
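A common way to handle regional vocabulary is to tag against concepts rather than words, with a preferred label per locale, and then expand searches to every label for the matching concept. The sketch below assumes that model; the concept IDs, locale codes, and `expand_query` function are hypothetical. It shows why "pants" needs the searcher's locale to be resolved correctly.

```python
# Hypothetical concept scheme: one concept ID per meaning, with each locale's label.
CONCEPTS = {
    "vegetable/zucchini": {"en-GB": "courgette", "en-US": "zucchini"},
    "clothing/trousers": {"en-GB": "trousers", "en-US": "pants"},
    "clothing/underwear": {"en-GB": "pants", "en-US": "underwear"},
}

def expand_query(term: str, locale: str) -> set[str]:
    """Resolve a search term to concepts in the searcher's locale,
    then return every regional label for those concepts."""
    term = term.lower()
    matches = [c for c, labels in CONCEPTS.items() if labels.get(locale) == term]
    if not matches:
        # Fall back to any locale, so an en-US user searching "courgette" still finds it.
        matches = [c for c, labels in CONCEPTS.items() if term in labels.values()]
    expanded = {term}
    for concept in matches:
        expanded.update(CONCEPTS[concept].values())
    return expanded

print(sorted(expand_query("pants", "en-US")))  # ['pants', 'trousers']
print(sorted(expand_query("pants", "en-GB")))  # ['pants', 'underwear']
```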

Taking hostages: taxonomy dependencies

As your data capabilities mature, you will realize that taxonomies can validate machine learning and can be combined with algorithms and advanced Boolean techniques to build rules within the DAM. This means that you can begin to automate searches that return a specific set of concepts, subjects, or topics. Once you do this, however, you build a dependency on those terms existing in the taxonomy. Should a term change, as language often does, every rule created with that term is affected. Go lightly into this realm, dear friends, but do not be daunted. Complex Boolean queries often use broad terminology, so the rules will usually still be covered by other metadata terms. Never accept stagnation out of fear.
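The dependency problem becomes easier to manage if saved rules are stored in a structured form rather than as free text. The sketch below assumes a hypothetical rule format with `all` (AND), `any` (OR), and `none` (NOT) term lists. It evaluates a rule against an asset's terms and, before a term is renamed or deprecated, lists every rule that would be affected.

```python
# Hypothetical saved rules: Boolean expressions over taxonomy terms,
# stored as {"all": [...], "any": [...], "none": [...]}.
RULES = {
    "summer-campaign": {"all": ["Outdoor"], "any": ["Beach", "Picnic"], "none": ["Winter"]},
    "winter-campaign": {"all": ["Outdoor", "Winter"], "any": [], "none": []},
}

def matches(rule: dict, asset_terms: set[str]) -> bool:
    """Evaluate a rule: AND over 'all', OR over 'any' (if given), NOT over 'none'."""
    return (
        all(t in asset_terms for t in rule["all"])
        and (not rule["any"] or any(t in asset_terms for t in rule["any"]))
        and not any(t in asset_terms for t in rule["none"])
    )

def rules_affected_by(term: str) -> list[str]:
    """Before renaming or deprecating a term, list the rules that reference it."""
    return sorted(
        name for name, rule in RULES.items()
        if any(term in rule[part] for part in ("all", "any", "none"))
    )

print(matches(RULES["summer-campaign"], {"Outdoor", "Beach"}))  # True
print(rules_affected_by("Winter"))  # ['summer-campaign', 'winter-campaign']
```

Running an impact check like `rules_affected_by` as part of the taxonomy change process turns "go lightly" into a concrete step rather than a reason to avoid change.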

Metadata health checks: any new elements needed?

Just as language and nomenclature evolve, so do data perspectives. Metadata elements will need to be added as your organization evolves. There was probably a time when you considered metadata only as asset descriptors. Now your organization has matured to understand that you may need to incorporate customer behaviors or communication tone as new metadata elements. Be flexible and expand the datafication of everything that needs to be actioned, measured, and maintained.

Data Audits: Know Your Data

Managing and maintaining data maturity requires deep knowledge of your data. That seems obvious, but merely looking at it and nodding your head doth not knowledge bring. Periodic data audits are an excellent way to understand whether your data is being used. You may discover workarounds that avoid compliance or that embed secret codes only a single market uses. These clutter up the database, and part of your job as a data steward is to make sure that the data fulfills the needs of the user. The first task of any data audit is to listen to your key stakeholders.

Understanding the data stakeholders

Getting to know your data stakeholders isn't about policing or reviewing their data habits. It is about better understanding how to interpret what they need from a machine. In the next chapter you will learn about machine learning and artificial intelligence, and they both rely on humans to program the machine. By understanding the needs of your stakeholders, you can bridge the gap between your users and the developers and data scientists who will configure the systems to help people do their jobs well.

Identifying where the data is stored

Discover where your data is stored. It might be in the cloud, or it might be in an on-premises data repository. Map out the data so that you know where it is, how it is represented, and where it travels. If data is stored in multiple places, do you have a communications plan in place for change requests or updates? In a mature data environment, a centralized, shared taxonomy is often used for tagging assets and content to help keep all dependent systems in sync automatically.
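A data map does not need special tooling to start; a table of data elements, their master system, their copies, and who owns change communication is enough to spot risks. The sketch below assumes that structure; the element names, systems, owners, and both functions are hypothetical. It flags elements that are copied to several systems with nobody responsible for communicating changes.

```python
# Hypothetical data map: each element, its master system, its copies, and who
# communicates changes to the systems holding copies.
DATA_MAP = [
    {"element": "product_name", "master": "PIM", "copies": ["DAM", "CMS"], "change_owner": "PIM team"},
    {"element": "usage_rights", "master": "DAM", "copies": [], "change_owner": "DAM team"},
    {"element": "brand_terms", "master": "Taxonomy service", "copies": ["DAM", "CMS", "PIM"], "change_owner": None},
]

def unmanaged_copies(data_map: list[dict]) -> list[str]:
    """Elements stored in more than one place with no one named to communicate changes."""
    return [row["element"] for row in data_map if row["copies"] and not row["change_owner"]]

def consumers_to_notify(data_map: list[dict], element: str) -> list[str]:
    """Systems holding a copy that must be updated when the master changes."""
    for row in data_map:
        if row["element"] == element:
            return row["copies"]
    return []

print(unmanaged_copies(DATA_MAP))                     # ['brand_terms']
print(consumers_to_notify(DATA_MAP, "product_name"))  # ['DAM', 'CMS']
```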

Conducting a data health check within the DAM

It is recommended to conduct regular health checks in your DAM to ensure data accuracy. Review the system's analytics on which search terms are used, which metadata elements are being populated, and which are usually left blank. Here is a quick checklist of further areas to explore:

- Missing data or null fields. Is important metadata missing that hinders search and retrieval? Metadata fields such as Asset or Content Type and Format are essential descriptive data and critical for future automation. Discover why the fields are missing and find a way to remedy the situation.

- Incorrectly formatted data, such as the wrong date notation. In a global company, you'll find different data standards that may prove confusing. Date notations, for example, differ between the US and UK: 03/04/2021 is March 4 in the US but 3 April in the UK. These differences must be acknowledged and normalized to a common standard such as the ISO 8601 date format, YYYY-MM-DD.

- Data generated by bots, for instance through a contact form on your website, or by chatbots that help users find what they are looking for and store automated responses. These bots are generally helpful. However, other bots are malicious and can hack into your data and exploit it. Be aware of the bots in play in your organization and be on the lookout for any anomalies. Contact your data scientist or IT representative immediately if you have any suspicions.

- Wrong data through human error, such as keying errors, misspellings, or omitted words. To err is human. When you discover consistently mis-tagged assets, consider using the taxonomy to map common misspellings or acronyms as synonyms. Alternatively, you can program the DAM to suggest autocorrections for these errors. Such errors will surface in regular data audits.
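Three of these checks lend themselves to a quick script over a metadata export. The sketch below assumes a hypothetical export with `asset_type`, `format`, `created`, and `locale` fields, and it assumes each market's date convention is known (`%m/%d/%Y` for en-US, `%d/%m/%Y` for en-GB). It computes fill rates for null-field checks, normalizes dates to ISO 8601, and maps known misspellings and acronyms to preferred terms.

```python
from collections import Counter
from datetime import datetime

# Hypothetical metadata export from the DAM (field names are illustrative).
ASSETS = [
    {"id": "A1", "asset_type": "Product image", "format": "JPEG", "created": "03/04/2021", "locale": "en-US"},
    {"id": "A2", "asset_type": "", "format": "PNG", "created": "03/04/2021", "locale": "en-GB"},
    {"id": "A3", "asset_type": "Video", "format": None, "created": "2021-04-03", "locale": "en-GB"},
]

def fill_rates(assets: list[dict], fields: list[str]) -> dict[str, float]:
    """Missing-data check: share of assets with a non-blank value for each field."""
    if not assets:
        return {f: 0.0 for f in fields}
    filled = Counter(f for a in assets for f in fields if a.get(f) not in (None, "", []))
    return {f: filled[f] / len(assets) for f in fields}

# Assumed date convention per market; values already in ISO 8601 are accepted as-is.
DATE_FORMATS = {"en-US": "%m/%d/%Y", "en-GB": "%d/%m/%Y"}

def to_iso(value: str, locale: str) -> str:
    """Formatting check: normalize a date string to ISO 8601 (YYYY-MM-DD)."""
    try:
        return datetime.strptime(value, "%Y-%m-%d").date().isoformat()
    except ValueError:
        return datetime.strptime(value, DATE_FORMATS[locale]).date().isoformat()

# Human-error check: known misspellings and acronyms mapped to preferred terms.
CORRECTIONS = {"snekers": "sneakers", "pos": "point of sale"}

def normalize_keywords(keywords: list[str]) -> list[str]:
    """Replace known variants with the preferred term and drop repeats, keeping order."""
    result: list[str] = []
    for keyword in keywords:
        term = CORRECTIONS.get(keyword.lower(), keyword)
        if term not in result:
            result.append(term)
    return result

print(fill_rates(ASSETS, ["asset_type", "format"]))
print([to_iso(a["created"], a["locale"]) for a in ASSETS])  # ['2021-03-04', '2021-04-03', '2021-04-03']
print(normalize_keywords(["POS", "snekers", "sneakers"]))   # ['point of sale', 'sneakers']
```

The date example shows why the source convention must be recorded: the same string, 03/04/2021, becomes two different ISO dates depending on the market it came from.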

Measuring DAM ROI

There are several ways to approach DAM return on investment (ROI), and it's a good idea to set up regular reporting cycles at the beginning of the deployment. As the DAM is rolled out to more users and markets, the returns should continue to grow. Here are some examples of the kinds of data to collect for measuring success.

Quantitative: metrics through measurement

- How many assets were downloaded and repurposed? This number will help you quantify the amount of time spent in 1) finding an asset and 2) re-using and repurposing an asset. Often, out of frustration at not being able to find what they’re looking for, creative teams will simply re-commission work from an agency to meet a tight deadline.

- Cost avoidance: By looking at downloads over a period of one week or one month, you can calculate cost avoidance by comparing typical agency fees for creating new assets with the cost of re-using or repurposing existing ones. If, in a typical period, six creative assets that would previously have been commissioned (such as creative pack shots, eCommerce product images, or in-store guidelines) can now be retrieved and repurposed instead, you may be able to calculate weekly or monthly savings of many tens or hundreds of thousands of dollars.

- Brand protection. With good rights metadata, brands can mitigate the risk of asset misuse. Infringement costs can be significant (in the millions), and clear guidelines and a well-managed, integrated DAM ecosystem can reduce legal costs by 20%.

- Sales impact. This is determined by data analytics and customer behaviors. Establish a means of measuring the impact of marketing campaigns, social media contributions, and seasonal sales figures, for example, to determine which assets had the most impact on sales. These insights can be shared with Customer Relationship Management (CRM) systems and/or the Customer Data Platform and can be used to inform future planning.

- Impact on marketing KPIs. Better click-through rates, measurable links between specific assets and their performance, and the resulting feedback and insights lead to better media spend calculations.

- Brand consistency. Shoppers are no longer confined to a single store to purchase their favorite products. As online shopping grows, consumers shop around for the best deals across multiple sites. Ensuring a seamless and consistent brand experience, regardless of the distribution channel, will keep customers engaged through every stage of the sales life cycle.
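The cost avoidance and marketing KPI measures reduce to simple arithmetic once the inputs are collected. The sketch below uses purely illustrative figures: the $15,000 agency fee and the $1,500 internal adaptation cost are assumptions, not benchmarks. Cost avoidance is the number of reused assets multiplied by the difference between the commissioning and reuse costs, and click-through rate is clicks divided by impressions.

```python
def cost_avoidance(reused_assets: int, agency_cost_per_asset: float, reuse_cost_per_asset: float) -> float:
    """Savings from re-using assets instead of re-commissioning them in one period."""
    return reused_assets * (agency_cost_per_asset - reuse_cost_per_asset)

def click_through_rate(clicks: int, impressions: int) -> float:
    """CTR for one asset: clicks divided by impressions (0 if never shown)."""
    return clicks / impressions if impressions else 0.0

# Illustrative figures only: 6 assets reused in a month, an assumed $15,000 agency
# fee per asset versus an assumed $1,500 internal cost to adapt an existing asset.
monthly = cost_avoidance(6, 15_000, 1_500)
print(f"Monthly cost avoidance: ${monthly:,.0f}")  # Monthly cost avoidance: $81,000

# Rank assets by CTR to see which ones drive engagement (hypothetical numbers).
performance = {"hero-video": (420, 12_000), "banner-v2": (95, 10_000)}
ranked = sorted(performance, key=lambda a: click_through_rate(*performance[a]), reverse=True)
print(ranked)  # ['hero-video', 'banner-v2']
```

Replace the assumed fees with your own agency rates and internal costs; the structure of the calculation stays the same.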

Saving time: metrics through improved efficiencies

Reporting on the time and effort saved with a DAM relies on two success factors:

1. A dedicated global support team available for change management, training, and basic onboarding: someone to be there should a user need help using the DAM.

2. Improved metadata facilitates discovery and findability. Because many companies still rely on shared folder structures on internal servers, a significant amount of time is wasted searching, looking, digging, and finally asking Patty-who-knows-where-everything-is to point you in the right direction. The metadata approach to DAM makes it far faster and easier to retrieve and reuse assets. Good metadata and training are key.

- In a recent audit, ICP found that an organization saved 63% of its time through good training and onboarding and reliable metadata, measured by the ratio of assets downloaded to searches made.

- Reduction in duplication. This cannot be overemphasized: business units and markets re-commission or recreate work that may already exist because they are unaware of its existence. Once business units and markets begin to upload their assets into a shared DAM, duplication is often exposed, and action can be taken to reduce myopic ways of working.
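Both measures can be tracked from data the DAM already holds. The sketch below computes a downloads-to-searches ratio and finds exact duplicate uploads by hashing file contents with SHA-256. The file names and byte strings are hypothetical stand-ins for real files. This hashing approach only catches byte-for-byte copies; resized or re-exported versions of the same image would need a different technique, such as perceptual hashing.

```python
import hashlib
from collections import defaultdict

def download_to_search_ratio(downloads: int, searches: int) -> float:
    """Share of searches that end in a download; a rising ratio suggests assets are easier to find."""
    return downloads / searches if searches else 0.0

def exact_duplicates(files: dict[str, bytes]) -> list[list[str]]:
    """Group files whose bytes are identical, using a SHA-256 content hash."""
    by_hash: dict[str, list[str]] = defaultdict(list)
    for name, data in files.items():
        by_hash[hashlib.sha256(data).hexdigest()].append(name)
    return [sorted(names) for names in by_hash.values() if len(names) > 1]

print(download_to_search_ratio(downloads=450, searches=1_000))  # 0.45

# Hypothetical uploads from different markets (bytes stand in for file contents).
uploads = {
    "uk/packshot.jpg": b"\xff\xd8 same bytes",
    "us/packshot_final.jpg": b"\xff\xd8 same bytes",
    "de/banner.png": b"\x89PNG other bytes",
}
print(exact_duplicates(uploads))  # [['uk/packshot.jpg', 'us/packshot_final.jpg']]
```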

Empower Innovation with Good Data

Data maturity is smart data. It doesn’t just describe an asset. It provides context to the business domain, it can be used for automation and personalization, and it can measure success through tracking content performance and asset reuse. It can streamline operational efficiencies and unify the enterprise in a cohesive ecosystem where workflows can be leveraged across platforms.

Data aesthetics in data visualization is an emerging field that will continue to evolve quickly, providing humans with beautiful graphs and interactive modules that help them understand patterns in large data sets. Treat your DAM data as best-in-breed and help prepare your organization for future innovations.